Domestic large models lack high-quality corpora; this alliance has taken action, and its open-source release drew 180,000 downloads in two weeks

In November 2022, ChatGPT emerged, ushering in the era of large models. But training a large model is like raising a child: only high-quality education produces high-quality output. High-quality corpora are therefore a key link in the large model industry chain. Against this backdrop, on July 6th this year, at the opening ceremony of the World Artificial Intelligence Conference, the establishment of the China Big Model Corpus Data Alliance, jointly initiated by Shanghai Artificial Intelligence Laboratory and other units, was announced. The alliance has moved quickly since. After releasing the first publicly available multimodal pre-training corpus, "Scholar · Ten Thousand Volumes", on August 14th, it admitted nine new member units and released new datasets on September 8th.

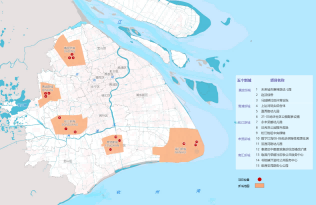

According to reports, the alliance brought together 10 initiators in its first batch, including Shanghai Artificial Intelligence Laboratory, China Central Radio and Television Station, China Institute of Science and Technology Information, Shanghai Newspaper Group, and Shanghai Data Group, a group that includes the mainstays and pioneers of corpus data supply at the national level and in Shanghai. On August 14th, the alliance released the "Scholar · Ten Thousand Volumes" multimodal pre-training corpus, with a total data volume of over 2TB, including over 500 million texts, 22 million interleaved text-and-image documents, and 1,000 program videos. Wang Yanfeng, Assistant Director of Shanghai Artificial Intelligence Laboratory, explained that the 2TB of data is the result of strict screening. The laboratory built the OpenDataLab technology platform for this purpose, which provides a large set of professional tools. Through classification, cleaning, identification, and other methods, the platform helps remove low-quality and contaminated data, shifting the corpus's emphasis from quantity to quality. Within two weeks of its release, "Scholar · Ten Thousand Volumes" reached 180,000 downloads, the highest download count for any single publicly released dataset in China since the rise of large models.

The appeal of a high-quality corpus has also drawn more units into "feeding" the models. This time, 9 units, including Shanghai Timi Robotics Co., Ltd., Shanghai Urban Construction and Urban Operation Co., Ltd., China Patent Technology Development Corporation, Shanghai Arbitration Commission, and Shanghai Data Exchange, have joined the alliance, which also launched its second batch of open-source corpus data, "Honey Nest · Pollen 1.0". Several member units have also drawn up plans to open-source their corpus data, and these will be released in turn.

Honey Nest · Pollen 1.0 comes from Shanghai Mido Information Technology Co., Ltd., one of the nine new members. The company's Chief Technology Officer, Liu Yidong, told reporters that many large models in China are trained on foreign-language data combined with a small amount of Chinese material, which leaves them with a weak understanding of Chinese and limited ability to generate content for Chinese scenarios. Honey Nest · Pollen 1.0 is based mainly on internet media data. After fine-grained processing, including filtering and cleaning, multi-condition deduplication, and a pre-review of data compliance by senior lawyers, the open-sourced Chinese data totals more than 70 million items. Mido's own series of large models has also been trained on the Pollen dataset. These models can be applied in vertical fields such as government and media, providing services such as knowledge Q&A, content generation, automatic generation of analysis reports, and content review and proofreading of documents.

Alliance members actively contribute corpora, but this is not purely a labor of love. Wang Zhijia, Director of the Artificial Intelligence Development Department of the Municipal Commission of Economy and Information Technology, explained that the alliance has designed four operating tiers, L1 to L4. L1 data is open-sourced to the public, L2 data is open-sourced only within the alliance, and L3 and L4 involve data that is listed or traded, on or off the exchange. "We have also been exploring incentive mechanisms based on contribution and sustainable operation, such as giving back to contributors through joint research and development with member units, research benefits, commercial licenses, and so on," Wang Yanfeng said.