Legislation in various countries cannot stop deep-synthesis videos. Can we only hope to "defeat magic with magic"?

Imagine that you suddenly receive an urgent call from a family member who says they are in trouble and asks you to send money. The familiar voice sounds anxious and dejected, even tearful. Only after you have sent the money do you discover that you have been deceived: the call did not come from your family member at all, but was forged by a scammer using artificial intelligence.

This is not the plot of a science fiction novel. With the rapid application and spread of artificial intelligence technology, such fraud has occurred frequently around the world over the past two years, causing huge losses. In May of this year, media reported that police in Baotou City, Inner Mongolia Autonomous Region, had received a report that fraudsters used face-swapping technology based on deep synthesis to impersonate a friend of the victim in a video call, claiming that a deposit was needed for a bidding process. Having seen the "friend" on video and heard the request firsthand, the victim transferred 4.3 million yuan as asked. The victim realized it was a scam only when the real friend said they knew nothing about it. According to reports, the police have recovered most of the stolen funds and are working to trace the remainder.

In an increasingly digital world, the rapid development of AI-based deep synthesis technology has made life more convenient, but its misuse has also brought a range of threats. The public has not yet developed sufficient awareness of the risks or of how to guard against them, and platform governance and government regulatory measures urgently need to be put into practice.

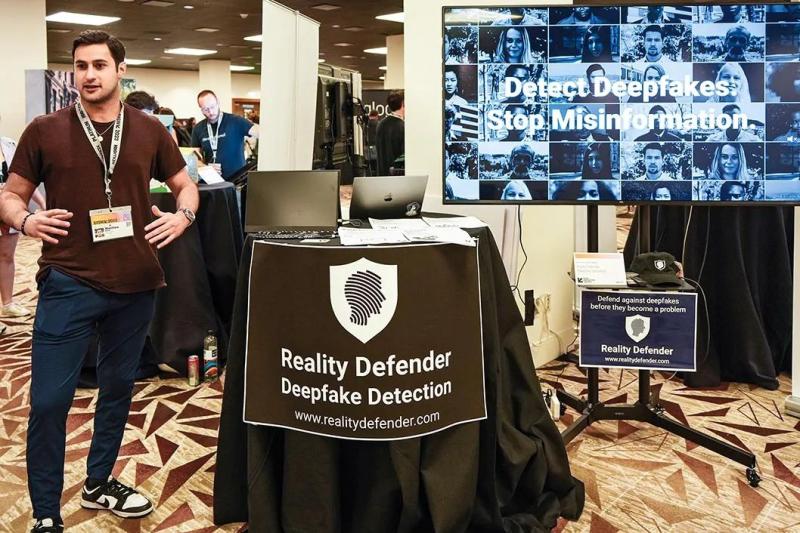

On March 11, at the South by Southwest conference and festival, a staff member introduces the "Defender of Truth" technology, which offers enterprises and other organizations online protection against fraud and sabotage carried out with "deepfakes" and automatically generated content.

1

Deep synthesis technology that passes the fake off as real

The ability to pass the fake off as real is the most important feature of deep synthesis technology. People often say that "what you hear may be false, but what you see is true" and that "a picture is proof", which shows that, in the public's subconscious, photos and videos are far more credible than words. Deep synthesis technology overturns precisely this assumption, because it can be used to forge audio, images, and video and to fabricate what people do and say.

Deep synthesis technology refers to the use of deep learning, virtual reality, and other generative and synthetic algorithms to create information such as text, images, audio, video, and virtual scenes. In recent years it has been widely applied in fields such as film and television, media, and education, for purposes such as special effects production, metaverse scene construction, and virtual simulation teaching. As commercial products have become widespread, a large amount of deep-synthesized content has appeared on the internet, exposing more and more of the public to the technology.

Deep synthesis services have also become openly available to the general public. With only a small amount of sample data, such as images, audio, video, or text, ordinary people can use easy-to-use deep synthesis tools to blur the line between real and false information. When the technology first appeared it was immature, and synthesized videos suffered from problems such as unnatural facial micro-expressions and jagged edges. In recent years, however, the deep-synthesized videos circulating online have become realistic enough, in the naturalness of the subjects' movements and in overall clarity and smoothness, that users find them hard to tell apart from genuine footage.

The scenarios in which deep synthesis technology is in demand are becoming increasingly diverse, and its applications are maturing. In September 2019, a mother asked Alibaba's artificial intelligence laboratory for help, hoping to turn her 14-year-old daughter, who had died of cancer, into interactive software. Three months later, the laboratory helped the mother synthesize 20 seconds of her daughter's speech and store it in a Tmall Genie device. The mother believes this is a new way of healing for families who have lost their only child, comparable to travel or psychological counseling. On Zhihu, among the 166 answers to the question "Can you accept a mother who lost her only child turning her daughter into an AI to keep her loved one with her?", more than 70% of respondents were accepting.

But as the barrier to producing deep-synthesized content falls lower and lower, the technology is often used as a tool for fraud. In October 2021, Forbes magazine reported, citing a court document, that fraudsters used a deep-synthesized voice to impersonate a customer and phoned a bank manager in the United Arab Emirates, claiming that the company was about to make an acquisition and needed the bank to approve a $35 million transfer. The bank manager confirmed the amount and the receiving account and then made the transfer. The stolen funds were subsequently moved to bank accounts around the world.

2

Gradually establishing a regulatory mechanism for deep synthesis governance

Once deep synthesis technology is abused, it can create serious risks and cause real harm. It can damage the personal and property rights of individuals and enterprises, such as portrait rights and reputation, and pose a major threat to social order, national political stability, and security.

For example, using deep synthesis technology to fabricate statements by national political figures or fake videos of public officials may pose significant risks to political and national security. After the Ukraine crisis escalated in early 2022, a synthesized video of Ukrainian President Zelensky circulated widely. In the video, Zelensky calls on Ukrainian soldiers to lay down their weapons. Because the video spread widely on Twitter, drawing more than 250,000 views, Zelensky himself tweeted to refute it. Claire Wardle, one of the leaders of the Information Futures Lab at Brown University in the United States, said, "This situation keeps happening, reminding people how easy it is to fool people."

For individuals and businesses, the misuse of deep synthesis technology may also seriously harm their rights and interests. For example, if a corporate executive appears in a fabricated deep-synthesized video, or has the content of a speech tampered with, it may cause market panic and trigger turbulence in financial markets. Individuals may have fake videos made of them, which fraudsters then use to defraud their relatives and friends, or may have fabricated indecent videos of them circulated, sometimes for illegal profit, damaging their right to reputation.

In response to the opportunities and challenges that deep synthesis services bring, including their malicious use, countries around the world have introduced laws and regulations to explore paths to governance under the rule of law. Both the EU and the United States have legislation under consideration. At the same time, they are pursuing other governance measures, such as strengthening the application of digital watermarking, developing authenticity-detection technology, and requiring internet companies to review the content on their own platforms, in order to combat false online content at the source.

Since 2020, China has gradually established a governance and regulatory mechanism for deep synthesis technology. On the one hand, it has strengthened the supervision of information content, requiring that no synthetic fake news be generated and that deep synthesis technology not be used for activities prohibited by laws and regulations. The Civil Code, which took effect in 2021, clearly stipulates in its book on personality rights that no one may use information technology to forge the portrait or voice of another person, whether for profit or not. The Provisions on the Administration of Algorithm Recommendation for Internet Information Services, which took effect on March 1, 2022, clearly require that synthetic fake news not be generated.

On the other hand, China has set out a series of requirements for deep synthesis service providers, technical supporters, and information publishers. Those who provide technical support for deep synthesis are required to consider potential risks and harms when developing, testing, and training the technology, and to fulfill the corresponding security assessment responsibilities and data security management obligations. Those who publish and disseminate information are required to label deep-synthesized content when publishing and sharing it. The Provisions on the Administration of Deep Synthesis of Internet Information Services, which took effect on January 10 this year, set out these requirements in detail.

It will take some time to move from legislation to regulatory enforcement. Although countries around the world have cracked down on the abuse of deep synthesis technology through legislation, technical governance, and other means, a massive volume of AI-enabled deep-synthesis fraud is still reaching the general public, who have not yet developed the awareness needed to guard against it.

3

"Defeat magic with magic"

As deep synthesis technology develops, it is becoming increasingly difficult to tell real content from fake. This erosion of trust will have a profound impact on areas ranging from news dissemination to everyday social interaction, and it poses a new challenge to living in a digital society: people must change the underlying assumption that "seeing is believing" and find new ways to establish trust.

How should we deal with the crisis of trust that deep synthesis technology has caused in cyberspace and the real world? Ordinary people should start by protecting themselves. First, treat personal information with caution and avoid sharing sensitive personal details on the internet, especially on social media platforms, since a leak could harm you or lead to identity theft. When you see news or information online, try to find several reliable sources to cross-check and verify it. Check the credibility of the sources, and be skeptical, especially of sensational or unverified claims.

Second, handle personal finances more cautiously. For example, personalized emails may trick you into revealing sensitive information, so be wary of any message that proactively asks for personal or financial details. Anyone who uses a computer, mobile phone, or network to handle financial information should regularly update the operating system, web browser, and security software.

Finally, it may be necessary to trust your intuition more. If something does not feel quite right or looks suspicious, trust that instinct. If you are not sure, verify the reliability of the source again and again rather than relying solely on what you hear or see in a call or video.

In the future, it may be even more necessary for scientists to actively develop artificial intelligence technology that can "defeat magic with magic". For example, the white paper titled "Using Model Models to Combat Fraud", jointly released by NetEase and KPMG, discusses an AI anti-fraud engine that the two parties developed together. According to the white paper, this engine can reduce fraudulent transactions by a further 40% on top of existing AI anti-fraud measures. In the digital age, human expertise needs to work together with artificial intelligence technology to actively provide fraud prevention services and improve their accuracy.